Tuesday, 10 November 2009

Do you have a data model of your production schema?

In total I had 36 votes for the poll with the question Do you have a data model of your production schema? and the results are as follows.

* 33% answered 'YES'.

* 58% answered 'NO'

* 8% answered 'I don't know'

What does this tell us?

I know the sample of 36 is too small almost irrelevant, but what is the right size anyway?

By looking at these results can we say that people in majority 58% do not know the data model of their databases?

I suppose as long as the database is performing well and there is enough space to accommodate whatever is thrown into the database, all is jolly and fine and nobody complains. Especially with technologies such as Hibernate where the data model is derived and implemented automatically from the object model persistence layer!? Who cares about Primary Keys and Foreign Keys, is this the end of databases? Is this how I should interpret this? I am not sure.

Next, what can we say for the second group of voters who are the 33% and who said, Yes! Are these voters in a stronger position than the voters of the 58%? Does this mean that this group does know their data model and do understand the relationships between their entities, and therefore command the SQL and either as a DBA or Developer are able to write better SQL scripts and much more high performance queries and code. Once again I am not sure.

Last the 8% who said 'I don't know', were they just casual visitors who just wanted to show their indifference in data modeling but at the same time, by voting chose to show that they were still involved with database systems? Again I don't know.

What I know is that all of you who voted gave me a small glimpse of what is out there and may I send you a BIG THANK YOU for that!

Thursday, 23 July 2009

Library Cache Pin Waits during PL/SQL compilation

Did you face slowdown or other production issues because of this?

Did you hit an error similar to the following when you or a developer tried to compile PL/SQL procedure which is referenced by another constantly executing high load PL/SQL procedure?

ERROR at line 1:

ORA-04021: timeout occurred while waiting to lock object AZIMUTH.UPDATEME

If yes, then carry on reading.

This post is about Conncurrency Wait issues you might get when trying to compile highly used constantly executing PL/SQL code in your production database.

I have come accross this situation on an Oracle 10g R2 production platform. What hapenned was someone tried to quickly compile PL/SQL procedures which were executing 2 times in a second by many sessions. More this procedure was called by a dozen of other PL/SQL procedures that is there were lots of dependencies between packages, views. The PL/SQL procedure in question was basically the busiest piece of code in the database. Logging sessions and their events.

You have to be carefull when you want to compile such highly used constantly executing piece of PL/SQL code in your system, at run-time. The rule is, that objects cannot be changed when they are used. As the session you want to compile the code in tends to keep on waiting to obtain a Library Cache Pin latch from the shared pool. If you do go ahead and compile such code, without scheduling downtime, you will probably halt the system. Try it with re-compiling packages which have dependencies with multiple other packages which are constantly in use, if you dare on production, and see what happens. You will face concurrency waits. Oracle says in metalink that such behaviour is expected and that you have to schedule downtime and maintenance window for re-compiling heavily used PL/SQL. The solution seem to be not to write complex dependencies in PL/SQL or to arrange maintenance window for deployments of PL/SQL. That is what the support told me, they also asked me to use some undocumented "_???" parameters to help with the latches. But the maintenance window seems to be the easiest or maybe standby database configuration. Feel free to comment on this post, workarounds and better solutions you can suggest.

See some Metalink notes with good reading on the subject:

- Doc ID: 115656.1 WAIT SCENARIOS REGARDING LIBRARY CACHE PIN AND LIBRARY CACHE LOAD LOCK

- Doc ID: 1054939.6 COMPILATION OF PACKAGE IS HANGING ON LIBRARY CACHE LOCK AND LIBRARY CACHE

- Doc ID:264476.1 ORA-4021 'LIBRARY CACHE PIN' and PL/SQL blocks

- Doc ID:169139.1 How to analyze ORA-04021 or ORA-4020 errors?

I am trying to simulate the above Concurrency waits with the following procedures where I run lock_test by many sessions and then try to re-compile updateme. I do see the Concurrency Waits in V$SESSION_WAITS.

CREATE OR REPLACE

PROCEDURE updateme

AS

BEGIN

FOR i IN 1 .. 100000000

LOOP

UPDATE test SET names = 'x' WHERE names = 'b';

END LOOP;

ROLLBACK;

END;

CREATE OR REPLACE

PROCEDURE lock_test

AS

BEGIN

updateme;

END;

Saturday, 27 June 2009

Oracle Apex Hosting

Long time now, I have been looking for a web hosting company, where I could host my Oracle Apex pages in full and economically, and found one for £3.50 a month!

Oracle's free http://apex.oracle.com hosting platform is not enough as it doesn't allow you to make external network calls, i.e. calls to Google services like Google Maps.

If you want to create an Oracle Apex application which uses Google Maps or even use internet able PL/SQL packages such as UTL_HTTP on the internet, you have to look elsewhere as the free Oracle Apex hosting platform doesn't let you do that.

But don't worry because the Application Outsource UK LTD platform for £3.50 a month gives you 10MB storage and full Oracle Apex hosting with the ability to do external network calls!

I can't say how pleased I am with their service. Now I am able to do everything I couldn't do with Oracle's Free Apex hosting platform. They have a great support website too.

Have a look at my Oracle Apex and Google Maps demo application I have crated using their platform here on My Oracle Apex and Google Maps samples.

|

| Yahoo, Google API and Oracle Apex |

|

| Google maps API and Oracle Apex |

More Reading

A good starting document on Integrating Google Maps and Oracle Apex. This document gave me the inspiration to try Google Maps with Oracle Apex.

1. Integrating_Application_Express_with_Google_Maps.pdf.

2. Google API

Monday, 18 May 2009

Update one table from another using ORACLE MERGE INTO

Assume we have 2 tables as:

create table t1

(

id number primary key not null,

name varchar2(50)

);

create table t2

(

id number primary key not null,

job varchar2(50)

);

insert into t1 values (1, 'Kubilay');

insert into t1 values (2, 'Robin');

insert into t1 values (3, 'Kenny');

select * from t1;

ID NAME

---------------------- --------------------------------------------------

1 Kubilay

2 Robin

3 Kenny

3 rows selected

insert into t2 values (1, 'DBA');

insert into t2 values (2, 'SA');

insert into t2 values (3, 'Developer');

select * from t2;

ID JOB

---------------------- --------------------------------------------------

1 DBA

2 SA

3 Developer

3 rows selected

And now we want to create a new column in t1 and move the data which is matching (primary key to primary key) from table t2 to table t1.

alter table t1 add job varchar2(60);

Use MERGE INTO to update table t1 with data from table t2.

merge into t1

using t2

on (t1.id = t2.id)

when matched

then update set

t1.job = t2.job;

select * from t1;

ID NAME JOB

---------------------- -------------------------------------------------- ------------------------------------------------------------

1 Kubilay DBA

2 Robin SA

3 Kenny Developer

3 rows selected

As you can see in the link you can use the MERGE statement to insert new rows like UPSERT when data in table t2 doesn't match with t1. That is, an Oracle UPSERT statement!

Thursday, 9 April 2009

Upgrading to 11g, a technical workshop seminar by Mike Dietrich

This was one of the best Oracle Events I have attended.

Mike run us through his 400+ slides of tips & tricks and best practices when upgrading to Oracle 11g. He also presented 3 real life cases in which he helped 3 big Oracle customers during their upgrade to Oracle 11g. The contents of the slides are very good and the presentation skills of Mike were excellent. In this post there is a link to the slides. The slides are quite big in size so you have to download them in 3 parts (about 8MB in total). Here are the slides: Oracle 11g Upgrade Workshop presentation

Workshop presentation highlights

- Better support to the optimizer during upgrade.

- Definitely apply the timezone patch, you can't upgrade without it.

- Recalculate statistics before upgrade.

- If you stay on the host use dbua.

- If you move host use command line upgrade.

- Provision for performance degradation, do tests, tests and tests.

It seems when it comes to upgrades in Oracle, people will always wait for R2. Mike said that Oracle is well aware of this and that with 11g they tried to break this taboo by explaining to us how Oracle actually are not introducing anything special as bug fixes in R2. People will see this themselves when they see how many little bugs will be fixed in R2.

From the presentation I also got the impression that you can upgrade to 11g, it is easy takes little time. But the performance implications the upgrade might introduce are still uncontrollable if you don't have diagnostic & tuning pack license purchased (£1777 each per CPU) . A Diagnostic packs license designed to show and sort out automatically all SQL statements which will regress! If you don't have diagnostic pack purchased, your DBA might end up dealing for days with hundreds of ''Regressed'' SQL statements because of the upgrade. Whereas the diagnostics package deals with it in hours. The DBA poor chap! (Hopefully Not!)

Thursday, 2 April 2009

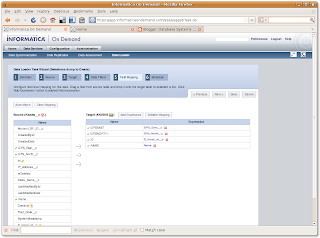

"Informatica on Demand" an ETL tool running in the Cloud

How is that possible? First thing which comes to mind is API, Java, Import & Export and custom code, code and code.

Not really, all you need is Informatica on Demand! A simple and intro level absolutely free ETL tool which is running in the Cloud!

Informatica is a very reputable company in the Data Integration field. Informatica on Demand is part of their free Software as a Service (SaaS) offering. An ETL tool which is itself in the Cloud. You don't have to install any software anywhere in your infrastructure, apart from a little Informatica agent which identifies your network to the Informatica Cloud. All you do is just create a login on their website and start working! How cool is that! You login to the Informatica on Demand website and configure and even schedule your data migration and ETL tasks between your databases and the Cloud as easy as checking your email!

This free tool does schema-to-schema migrations only. It doesn't allow you to migrate by writing custom SQL on the source window and loading its result set to the target. It is limited to table-to-table migrations between separate sources and targets. You can however, save your SQL as a view in your source database and the tool will see this as a table object.

In the same Informatica on Demand website and within the same Cloud infrastructure, they also have a suite of other more advanced tools such as Data Synchronization, Data Replication and Data Assessment. This stack of tools is not free. But a 30 day trial is still possible.

Overall, I found the tool quite easy to use and with few clicks, I could transfer thousands of rows from say Oracle to Salesforce and from Microsoft SQL Server to Oracle. No need to install and configure gateways, ODBC and no need tweak any hardware locally. It also has a scheduler which works and sends emails when tasks are complete and all this is still hosted and running in The Could. So you don't have to backup anything or look after things.

Here is a snapshot of the Field Mapping screen:

Tuesday, 17 February 2009

ER modeling Tools now on the Web!

The tool is called GAE SQL Designer and is done by Jason W. Miller. It is an ER modeling tool on the web! All you need is a browser and you start modeling databases, with no installs and no extra software. You can access it directly here http://gaesql.appspot.com/

Excellent, I liked the idea very much! One thing that is missing is the ability to generate SQL from your data models. There is another less intuitive google app for that here: http://code.google.com/p/wwwsqldesigner.

Apart from that, I think Jason's GAE SQL Designer will be useful in quick prototyping and learning. Well done Jason!

Wednesday, 4 February 2009

Oracle SQL Developer for Ubuntu

http://blog.kizoom.com/2009/02/oracle-sqldeveloper-for-ubuntu-version.html

Tuesday, 13 January 2009

Blocking sessions-locks in Oracle

In this post I will introduce an SQL script, which would quickly tell you if there is blocking between the sessions and which would also show you what SQL these sessions are using.

To see the script working, first create a dummy table and insert some test data.

SQL> create table t (a char(1));

Table created.

SQL> insert into t values ('z');

1 row created.

SQL> commit;

Then select some rows from the dummy table for update.

SQL> select * from t where a='z' for update;

A

-

z

In second session try to update the rows which you have selected above. Due to locks this will block! ACID and serialization kicks in.

SQL> update t set a='x' where a='z';

It will just hung!

To see what is blocking, run this query in a third session as SYSDBA.

SELECT TO_CHAR(sysdate, 'DD-MON-YYYY HH24:MI:SS')

|| ' User '

||s1.username

|| '@'

|| s1.machine

|| ' ( SID= '

|| s1.sid

|| ' ) with the statement: '

|| sqlt2.sql_text

||' is blocking the SQL statement on '

|| s2.username

|| '@'

|| s2.machine

|| ' ( SID='

|| s2.sid

|| ' ) blocked SQL -> '

||sqlt1.sql_text AS blocking_status

FROM v$lock l1,

v$session s1 ,

v$lock l2 ,

v$session s2 ,

v$sql sqlt1 ,

v$sql sqlt2

WHERE s1.sid =l1.sid

AND s2.sid =l2.sid

AND sqlt1.sql_id= s2.sql_id

AND sqlt2.sql_id= s1.prev_sql_id

AND l1.BLOCK =1

AND l2.request > 0

AND l1.id1 = l2.id1

AND l2.id2 = l2.id2

/

BLOCKING_STATUS

--------------------------------------------------------------------------------

15-JAN-2009 07:41:27 User hero@world.com ( SID= 144 ) with the statemen

t: select * from t where a='z' for update is blocking the SQL statement on hero@world.com ( SID=147 ) blocked SQL -> update t set a='x' where a='z'

Monday, 12 January 2009

Google Chart APIs

How about if you could just pass 2-3 numbers to a URL and get back a very nice looking SVG chart on a web page for FREE and on your website?

I found out that Google now give an API where you pass few numbers in a URL and it just Charts them for your.

Try for yourself here:

http://code.google.com/apis/chart/

I taught I blog about this, as I can see uses of it in real time monitoring. They claim that you can call their API up to 250K times a day for free.

Here is a nice idea. A day has 86400 seconds, hmmm how about monitoring the load of your server with it? :-)

Create a database using OSDM (Oracle SQL Developer Modeling)

Suppose your database requirements are as simple as follows:

- You want an event management database system. Not a calendar, but something where you can write events into.

- You want to be able to invite friends to attend to these events.

- You also want your friends/users to choose the types of events they like most.

Data model it with OSDM

Using OSDM you can quickly draw the data model with foreign keys and primary keys for your database design requirements above. Is not difficult to use just filling in dialog boxes about the properties of the tables.

(Click to enlarge)

OSDM will not only draw you the model, but will also give you the ability to extract the DDL and then run it on something like Oracle Apex's SQL command prompt. How great is that! I just hope that Oracle WILL give this tool free with Oracle SQL developer in later releases.

Extract the DDL from the Model

In OSDM use File -> Export -> DDL File to extract the SQL statements from the data model.

Using the simple OSDM you can extract the DDL from the above model and you get a nice SQL file with the CREATE TABLE and ALTER TABLE ... CONSTRAINT ... commands which will look like this:

-- Generated by Oracle SQL Developer Data Modeling Version: 1.5.1 Build: 518

-- at: 2009-01-12 21:56:49

-- for: Oracle Database 10g

-- Oracle Database 10g

CREATE TABLE EVENTS

(

EVENT_ID NUMBER NOT NULL ,

EVENT_DESCRIPTION CLOB ,

EVENT_DATE DATE ,

EVENT_TYPE_ID NUMBER NOT NULL

) LOGGING

;

ALTER TABLE EVENTS

ADD CONSTRAINT EVENTS_PK PRIMARY KEY ( EVENT_ID ) ;

CREATE TABLE USERS

(

USER_ID NUMBER NOT NULL ,

USER_EMAIL VARCHAR2 ,

USER_NAME VARCHAR2

) LOGGING

;

ALTER TABLE USERS

ADD CONSTRAINT USERS_PK PRIMARY KEY ( USER_ID ) ;

CREATE TABLE ATTEND

(

USER_ID NUMBER NOT NULL ,

EVENT_ID NUMBER NOT NULL ,

ATTEND_DATE DATE

) LOGGING

;

CREATE TABLE EVENT_TYPES

(

EVENT_TYPE_ID NUMBER NOT NULL ,

EVENT_TYPE_DESCRIPTION VARCHAR2 NOT NULL

) LOGGING

;

ALTER TABLE EVENT_TYPES

ADD CONSTRAINT EVENT_TYPES_PK PRIMARY KEY ( EVENT_TYPE_ID ) ;

CREATE TABLE USER_EVENT_PREFERENCES

(

USER_ID NUMBER NOT NULL ,

EVENT_TYPE_ID NUMBER NOT NULL

) LOGGING

;

ALTER TABLE EVENTS

ADD CONSTRAINT EVENTS_EVENT_TYPES_FK FOREIGN KEY

(

EVENT_TYPE_ID

)

REFERENCES EVENT_TYPES

(

EVENT_TYPE_ID

)

NOT DEFERRABLE

;

ALTER TABLE ATTEND

ADD CONSTRAINT ATTEND_USERS_FK FOREIGN KEY

(

USER_ID

)

REFERENCES USERS

(

USER_ID

)

NOT DEFERRABLE

;

ALTER TABLE ATTEND

ADD CONSTRAINT ATTEND_EVENTS_FK FOREIGN KEY

(

EVENT_ID

)

REFERENCES EVENTS

(

EVENT_ID

)

NOT DEFERRABLE

;

ALTER TABLE USER_EVENT_PREFERENCES

ADD CONSTRAINT USER_EVENT_PREFERENCES_USERS_FK FOREIGN KEY

(

USER_ID

)

REFERENCES USERS

(

USER_ID

)

NOT DEFERRABLE

;

ALTER TABLE USER_EVENT_PREFERENCES

ADD CONSTRAINT USER_EVENT_PREFERENCES_EVENT_TYPES_FK FOREIGN KEY

(

EVENT_TYPE_ID

)

REFERENCES EVENT_TYPES

(

EVENT_TYPE_ID

)

NOT DEFERRABLE

;

-- Oracle SQL Developer Modeling Summary Report:

--

-- CREATE TABLE 5

-- CREATE INDEX 0

-- ALTER TABLE 5

-- CREATE VIEW 0

-- CREATE PROCEDURE 0

-- CREATE TRIGGER 0

-- CREATE STRUCTURED TYPE 0

-- CREATE COLLECTION TYPE 0

-- CREATE CLUSTER 0

-- CREATE CONTEXT 0

-- CREATE DATABASE 0

-- CREATE DIMENSION 0

-- CREATE DIRECTORY 0

-- CREATE DISK GROUP 0

-- CREATE ROLE 0

-- CREATE ROLLBACK SEGMENT 0

-- CREATE SEQUENCE 0

-- CREATE SNAPSHOT 0

-- CREATE SYNONYM 0

-- CREATE TABLESPACE 0

-- CREATE USER 0

--

-- DROP TABLESPACE 0

-- DROP DATABASE 0

--

-- ERRORS 0

-- WARNINGS 0

To get Oracle SQL Developer Data Modeling go to Oracle's Website here

Thursday, 8 January 2009

Oracle Apex and Ajax

I have already created my Ajax Search Page, see here http://apex.oracle.com/pls/otn/f?p=38209:fishbase, by reading instructions from an example in a book called Pro Oracle Application Express book by John Edward Scott and Scott Spendolini . A very good all-round concepts book on Oracle Apex Development.

You will need 2 pages to create the Ajax Search Page.

1. Create a new blank page in your application with a search query

You need to create a new blank page in your application and you need to use the Printer Friendly page template as it has to be minimal looking with no headers, footers or tabs. This page will also have a Report region where you will write the SQL query which the Ajax Search Page will use to search your chosen table on your chosen column. In my case this page was page7.

The SQL I used for searching the "fish" table in my application is like below

select f_name_gb "English", f_name_gr "Greek", f_latin "Latin", f_name_tr "Turkish" from fish

where

(

instr(upper(f_name_gb), upper(nvl(:P7_SEARCH, f_name_gb))) > 0

or

instr(upper(f_name_gr), upper(nvl(:P7_SEARCH, f_name_gr))) > 0

or

instr(upper(f_name_tr), upper(nvl(:P7_SEARCH, f_name_tr))) > 0

or

instr(upper(f_latin), upper(nvl(:P7_SEARCH, f_latin))) > 0

)

2. Modify the Printer Friendly Page Template you used in step 1 above

In page7 above, you will also need to change the Body section of the Printer Friendly template to use the code below, that is the template code around the tag #BOX_BODY# , should be modified like below.

<ajax:BOX_B0DY>

<div id="BOX_BODY">

#BOX_BODY##REGION_POSITION_02##REGION_POSITION_04#</div>

</ajax:BOX_B0DY></td>

Now you can run page7 and it will display all records from your table without applying the search.

Now the most important bit is the fact that we will call and display page7 with Ajax into a region in another page. That is why the use of the Printer Friendly template on page7. Because page7 will display via a <div> tag in page1. One more thing, is that page1 which calls the AjaxReport page7, must have a Search Box Text item for the search field where users will type. You must create this text field in page1. This text field is your Search Box, it is where users will type and search.

3. Do an Ajax call on the page you have just created above from another page.

When users enter text in the Search filed in page1, a call is made to the Ajax page page7 from page1 via the JavaScript function on page1, see below. The search is performed and the SQL is executed. The Javascript you will add to the Header of the calling page1 performs the Ajax call and retrieves the report from page7 and places it in the <div> tag which is in a region of page1. You will see what the region with the <div> contains in later steps.

Just include your page number in lines 5 and 6 below. As you see because my Ajax page number is 7, it has 7 and P7_SEARCH included in these lines. Modify this to reflect your page number.

<script type="text/javascript">

<!--

function AjaxReportRefresh(pThis){

var l_val = pThis.value;

var get = new htmldb_Get(null,$x('pFlowId').value,null,7);

get.add('P7_SEARCH',l_val)

gReturn = get.get(null,'<ajax:BOX_BODY>','</ajax:BOX_BODY>');

get = null;

$x('AjaxReport').innerHTML = gReturn;

return;

}

//-->

</script>

4. Create a new HTML region on page1 call it AjaxReport

Next, I created a new HTML region on page1 and called it AjaxReport. This is where the results from page7 will be displayed. The source of this region is the <div> tag :

<div id="AjaxReport"><br /></div>

5. Last Step, call the Javascript function created in step 3

Everytime something is entered into the search field P1_SEARCH on page1 the JavaScript function of step 3 will be called. To do that enter the code below to the HTML Form Element Attributes section for P1_SEARCH (search box Text field item) page item on page1.

onkeyup=" AjaxReportRefresh (this)"

You are ready to test the page, type some letters and see how the page responds by filtering records from your table as you type the letter. Enjoy!

Monday, 5 January 2009

Installing Oracle Apex on Oracle 10g R2 with EPG is not supported

My Oracle 10g R2 dev installation didn't have extra database applications and it was not ready for any kind Apex configuration not even for the supported configuration which uses an HTTP server.

Oracle Apex and Oracle 10g database requirements

http://download.oracle.com/docs/cd/E10513_01/doc/install.310/e10496/pre_require.htm#BABFCFBC

SQL> @$ORACLE_HOME/ctx/admin/catctx.sql ctxsys DRSYS TEMP NOLOCK2. Install Oracle JVM (for releases before 10g R1 (10.1) )

SQL>@$ORACLE_HOME/javavm/install/initjvm.sqlSQL>@$ORACLE_HOME/ctx/admin/catqm.sql change_on_install XDB TEMPSQL>@$ORACLE_HOME/ctx/admin/catxdbj.sql4. Install Apex

SQL>@apexins password APEX_TS APEX_TS TEMP /i/If you succeed you will get:

Thank you for installing Oracle Application Express.

Oracle Application Express is installed in the FLOWS_030000 schema.

The structure of the link to the Application Express administration services is as follows:

http://host:port/pls/apex/apex_admin

The structure of the link to the Application Express development interface is as follows:

http://host:port/pls/apex